Imagine driving on a remote mining road, kilometers away from civilization, where massive haul trucks weighing hundreds of tons thunder past every few minutes. These roads need to be incredibly strong and durable, yet they’re often built from waste rock, the material excavated during mining operations that doesn’t contain valuable minerals. The challenge is ensuring these roads can withstand punishment while keeping construction costs reasonable. This is where an unlikely hero enters the scene: machine learning.

The Problem with Traditional Road Testing

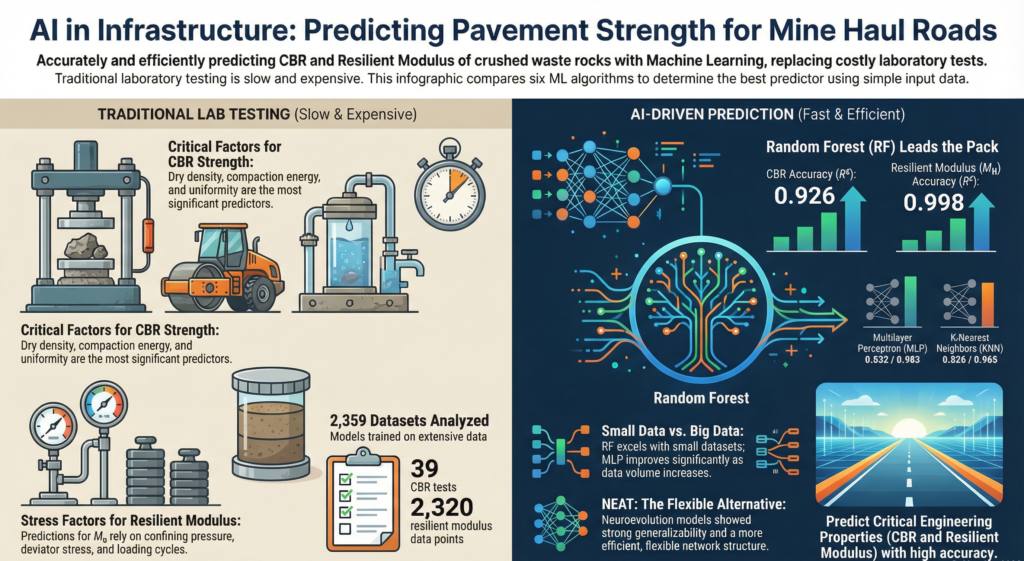

When engineers design roads, whether for mining operations or city streets, they need to know two critical properties of the materials they’re using. The first is something called the California Bearing Ratio, or CBR for short. Think of CBR as a strength test, it tells you how much force the material can handle before it starts to fail. The second property is the resilient modulus, which measures how stiff the material is and how well it bounces back after being compressed by traffic.

Traditionally, measuring these properties requires expensive laboratory equipment and specialized technicians. For CBR testing, engineers take samples of the crushed rock, compact them carefully, and then push a piston into the material to see how much force it takes. For resilient modulus, they perform what’s called repeated load triaxial testing, where they squeeze samples thousands of times to simulate years of traffic loading. Each test can take days to complete and costs hundreds or thousands of dollars.

Here’s the real kicker: mining haul roads typically only last a few years before they need to be rebuilt or relocated as the mine expands. Spending weeks testing materials for a road that might only be used for three to five years doesn’t make much economic sense. This creates a dilemma, engineers need reliable data to design safe roads, but the traditional testing methods are often too expensive and time-consuming for short-lived mining infrastructure.

The Study

A recent study by researchers at Polytechnique Montreal tackled this problem head-on by asking a simple question: could machine learning predict these crucial pavement properties without running expensive lab tests? The researchers, Shengpeng Hao and Thomas Pabst, tested six different machine learning algorithms to see which ones could most accurately predict CBR and resilient modulus based on simpler, easier-to-measure properties.

The study focused on crushed waste rocks from Canadian Malartic Mine, an open-pit gold mine in Quebec. These rocks are typical of what you’d find at most hard rock mines, angular particles with rough surfaces, containing about 65% gravel, 30% sand, and less than 5% fine particles. The material is essentially well-graded gravel, perfect for road construction if you can figure out how it will perform.

The Six Contenders

The researchers compared six different machine learning approaches, each with its own strengths and quirks. Multiple Linear Regression, or MLR, is the simplest approach, it assumes there’s a straight-line relationship between input factors like density and the output properties like CBR. While this method is easy to understand and use, it struggles with complex relationships that don’t follow simple patterns.

K-Nearest Neighbors, known as KNN, takes a different approach. Imagine you have a database of tested materials, and you want to predict the properties of a new, untested sample. KNN looks at the most similar samples in your database and essentially says, “Well, if this new sample is very similar to these three samples we’ve already tested, it will probably behave similarly.” The researchers found that using just two neighbors gave the best predictions, any more than that, and the accuracy started to drop.

Decision Trees work like a flowchart of questions. The algorithm asks: “Is the dry density greater than 2,300 kilograms per cubic meter? If yes, go this way. If no, go that way.” It keeps splitting the data based on these questions until it arrives at a prediction. For the CBR predictions, the decision tree only needed to ask two layers of questions, with dry density being the most important factor.

Random Forest is like having a committee of decision trees voting on the answer. Instead of relying on one decision tree, it creates many trees (twenty for CBR, fifteen for resilient modulus in this study) and averages their predictions. This approach turned out to be remarkably effective, consistently providing the most accurate predictions.

Multilayer Perceptron, or MLP, is what most people think of when they hear “neural network.” It’s inspired by how neurons in the brain work, with layers of interconnected nodes that process information. The challenge with MLP is figuring out the right architecture, too few layers or neurons and it can’t capture complex patterns; too many and it starts overfitting, essentially memorizing the training data rather than learning general patterns.

Finally, there’s NEAT, which stands for Neuroevolution of Augmenting Topologies. This is the most exotic of the bunch. Instead of a human deciding how many layers and connections the neural network should have, NEAT uses principles from biological evolution to grow and optimize the network structure automatically. It starts simple and gradually adds complexity over thousands of generations, keeping what works and discarding what doesn’t.

What Goes In: Choosing the Right Inputs

Before any machine learning algorithm can make predictions, it needs to know what factors matter. For CBR, the researchers identified eight key properties through correlation analysis. Dry density emerged as the star player, with a correlation coefficient of 0.90, meaning it had a very strong relationship with CBR. This makes intuitive sense, denser materials are generally stronger. Compaction energy, fines content, and various measures of particle size distribution also proved important.

Interestingly, two factors that engineers might expect to matter, water content and a specific particle size parameter called D30, turned out to have weak correlations with CBR. The researchers wisely excluded these from their models, demonstrating an important principle in machine learning: more data isn’t always better if that data doesn’t actually help explain what you’re trying to predict.

For resilient modulus, the inputs were different and simpler. Since the researchers were working with laboratory test data where they controlled the testing conditions, they focused on three factors: the number of loading cycles (how many times the material had been squeezed), confining pressure (how much lateral squeeze was applied), and deviator stress (how much vertical load was applied). The study found that resilient modulus increased with both confining pressure and deviator stress, which aligns with what engineers have observed in other materials—granular materials tend to get stiffer when you squeeze them harder.

The Training Process: Teaching Machines to Predict

Training a machine learning model is a bit like teaching someone to estimate the weight of objects by feel. You start by showing them lots of examples, “This rock weighs two kilograms, this one weighs five kilograms” and they gradually learn to make educated guesses about new objects based on patterns in what they’ve learned.

The researchers split their data carefully. For CBR, they had thirty-nine laboratory test results, a relatively small dataset. They used 70% of this data (twenty-seven tests) to train the models and held back 30% (twelve tests) to check if the models could accurately predict results they’d never seen before. For resilient modulus, they had a much larger dataset of 2,320 measurements from repeated load triaxial tests, allowing them to use 1,624 for training and 696 for testing.

To prevent overfitting, where a model essentially memorizes training data rather than learning general patterns, they used a technique called 5-fold cross-validation. This involves splitting the training data into five parts, training on four parts while validating on the fifth, then rotating which part is used for validation. It’s like a student studying for an exam by taking five different practice tests, each covering slightly different material, to make sure they understand the concepts rather than just memorizing answers.

The Results

When the dust settled, Random Forest emerged as the champion. For CBR prediction, the RF model achieved a coefficient of determination (R²) greater than 0.9, meaning it could explain more than ninety percent of the variation in CBR values. The mean squared error, essentially the average size of prediction mistakes, was impressively low at around 300 for the testing data.

For resilient modulus, Random Forest was even more dominant, with R² values exceeding 0.99 and mean squared errors below 20. To put this in perspective, if the actual resilient modulus was 300 MPa, the model’s prediction would typically be within about 4 MPa, that’s better than 98% accuracy.

MLP also performed well, particularly for resilient modulus where the larger dataset gave it enough information to properly train its neural network architecture. The researchers found that the optimal MLP structure for resilient modulus needed five hidden layers with forty neurons each, a relatively deep network that could capture complex nonlinear relationships. For CBR, with its smaller dataset, a simpler network with just one hidden layer of twenty neurons worked best.

NEAT produced interesting results. While it didn’t achieve the highest accuracy, it demonstrated excellent generalizability, meaning its performance on new, unseen data was actually slightly better than on the training data. This is unusual and valuable. NEAT also produced remarkably simple network structures, with just eight hidden neurons for CBR and four for resilient modulus, compared to MLP’s much larger networks.

The simpler approaches struggled more. Multiple Linear Regression, assuming straight-line relationships, achieved R² values around 0.7 to 0.8 for CBR and similar values for resilient modulus. This makes sense when you consider that the behavior of crushed rock under loading is inherently nonlinear and complex, simple linear equations just can’t capture all the nuances.

Why Random Forest Won

The Random Forest success story offers important insights. This algorithm essentially builds expertise through diversity, each decision tree in the forest might make slightly different mistakes, but by averaging their predictions, these mistakes tend to cancel out. It’s similar to how polling multiple experts usually gives you a better answer than asking just one person.

Random Forest also proved particularly robust when working with the small CBR dataset. Many machine learning algorithms need large amounts of data to work well, but Random Forest’s ensemble approach, combining multiple simple models, allowed it to extract reliable patterns even from limited information. This is crucial for practical applications where collecting extensive test data might not be feasible.

The sensitivity analysis revealed interesting patterns about what factors truly matter. For CBR, dry density dominated in the Random Forest model, with a sensitivity value of 0.98. This means that variations in dry density accounted for almost all the model’s predictive power. The MLP model, interestingly, identified compaction energy as the most important factor, which makes sense because compaction energy largely determines the final dry density of the material.

For resilient modulus, both Random Forest and MLP agreed that deviator stress was the king, with sensitivity values exceeding 0.8. Confining pressure came in second with sensitivities around 0.3 to 0.4. Surprisingly, the number of loading cycles showed minimal influence on the predictions, suggesting that for these crushed waste rocks, the material reached its characteristic stiffness relatively quickly and didn’t change much with repeated loading.

Real-World Applications and Limitations

These findings have immediate practical value for mining operations. Instead of spending weeks running expensive laboratory tests on every batch of waste rock, engineers could potentially measure simpler properties like dry density, particle size distribution, and compaction energy, then use the Random Forest model to predict CBR and resilient modulus. This could save thousands of dollars per road segment and dramatically speed up the design process.

However, the researchers were appropriately cautious about limitations. All their data came from one mine, Canadian Malartic in Quebec. While the waste rocks there are typical of hard rock mines, geological variability means that rocks from a copper mine in Chile or a nickel mine in Australia might behave differently. The models would need to be retrained or validated with local materials before being applied elsewhere.

The study also highlighted the fundamental challenge of limited data. With only thirty-nine CBR tests available, even the best machine learning algorithms struggled to achieve the same accuracy as they did for resilient modulus, where thousands of measurements were available. This underscores an important principle: machine learning isn’t magic. It can find patterns in data, but it needs sufficient, high-quality data to learn from.

Looking to the Future

The success of this research opens exciting possibilities. As mines around the world collect more data on their waste rock properties, models could be continually updated and improved. Imagine a global database where mines share anonymized test results, allowing machine learning models to learn from materials across diverse geological settings.

NEAT deserves special mention for future applications. While it didn’t win the accuracy contest, its ability to automatically evolve network architecture and select important input features is valuable. In complex engineering problems where humans might not know which factors are most important, NEAT could explore the solution space more thoroughly than approaches that require human decisions about model structure.

The researchers suggest that next steps should include testing these models on waste rocks with different characteristics, varying gradations, water contents, and fines contents. This would help determine how well the models generalize beyond the specific materials tested at Canadian Malartic Mine.

Conclusion

This study represents a small but significant step in a larger transformation happening across engineering fields. Machine learning is increasingly complementing, and sometimes replacing, traditional empirical approaches that relied on extensive laboratory testing. This doesn’t mean engineers will stop doing lab tests, validation and understanding material behavior remain crucial—but it does mean we can be smarter about when and what we test.

For mining operations, where profit margins are often tight and operational demands are intense, anything that can reduce costs while maintaining safety standards is valuable. If machine learning models can reliably predict pavement properties, mines can design better roads faster and cheaper, potentially extending equipment life, reducing maintenance costs, and improving safety for the operators driving those massive haul trucks.

The research also demonstrates that the choice of algorithm matters. There’s no universal “best” machine learning approach: Random Forest excelled here, but different problems might favor different solutions. The key is testing multiple approaches and honestly evaluating their performance, exactly as these researchers did.

As we move forward, the integration of machine learning into geotechnical engineering will likely accelerate. The algorithms are getting better, computing power continues to increase, and the amount of available data keeps growing. Studies like this one, methodically comparing different approaches and honestly reporting both successes and limitations, provide the foundation for confident, responsible adoption of these powerful tools.

The next time you read about autonomous haul trucks or smart mining operations, remember that underneath those flashy applications are quieter innovations like this one, using machine learning to solve fundamental engineering challenges, one crushed rock sample at a time.

Reference

- Hao, S., Pabst, T. Prediction of CBR and resilient modulus of crushed waste rocks using machine learning models. Acta Geotech. 17, 1383–1402 (2022). https://doi.org/10.1007/s11440-022-01472-1